The problem

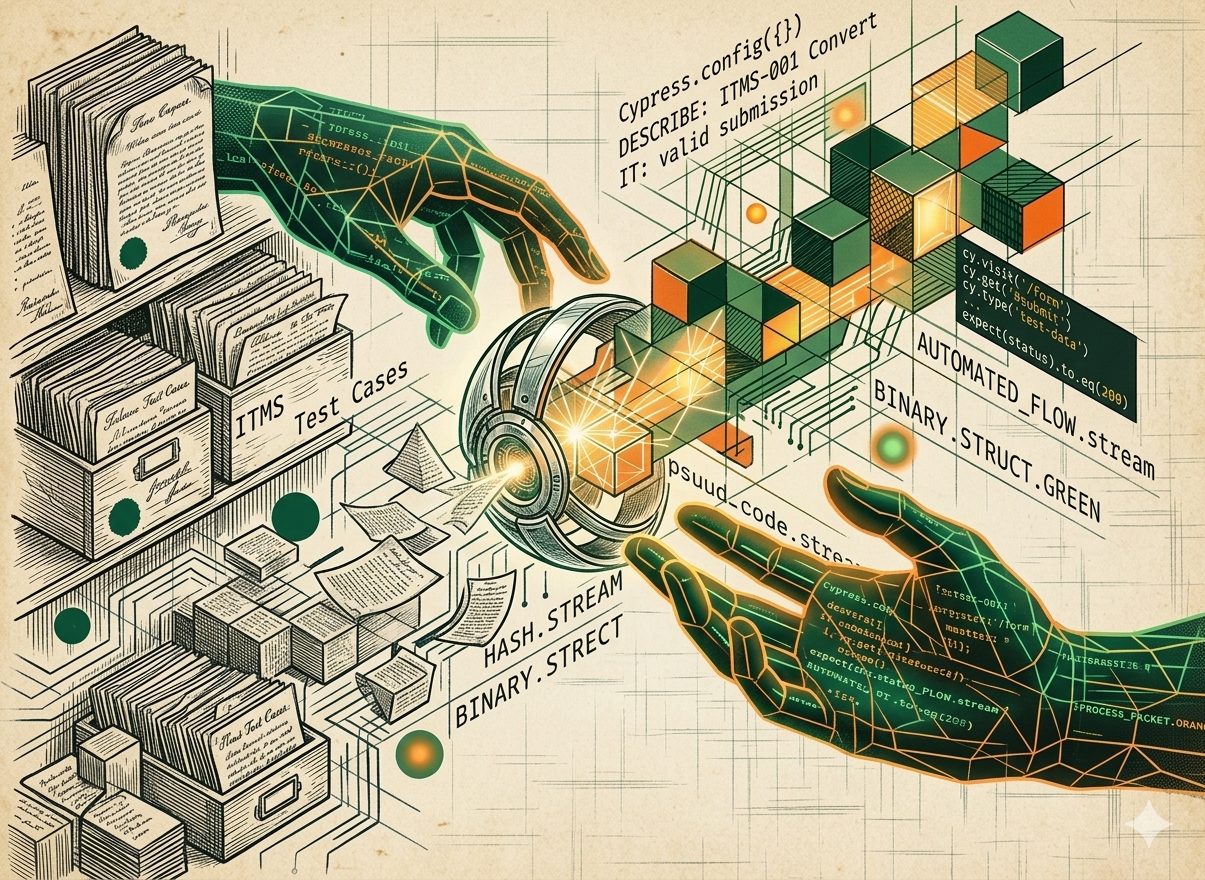

This experiment is not primarily about challenging the habit of writing short test cases. The real issue lies elsewhere: test cases in ITMS are written in natural language, while Cypress (the tool that actually executes the tests) requires them in a programming language. This creates a structural gap between test design and test automation.

The purpose of the experiment is to bridge that gap. By using AI to translate natural-language test cases from ITMS into executable Cypress code, we aim to automate the transition from functional description to technical implementation, without losing clarity, consistency, or intent.

Research question

We explored a simple but impactful question.

Can AI generate Cypress automation code directly from ITMS test cases and make test automation faster and more consistent?

Concretely, the experiment tested two things: whether AI can translate ITMS test cases into working Cypress automation code, and whether this approach speeds up test automation while making tests more consistent.

Experiment

To explore how AI can improve test automation workflows, we built an integration between Infodation’s own testing tool, ITMS, and the Cypress testing framework. The goal was to automatically generate Cypress code templates directly from the test cases listed in each ITMS ticket.

Using AI, the system analyzes structured test cases and converts them into automation-ready code that follows the repository’s existing coding style and conventions. This promotes consistency across tests and reduces the manual effort normally required to translate functional test cases into automated scripts.
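The write-up does not describe how the AI is prompted. As a hedged illustration, one plausible way to make generated code follow the repository's conventions is to include existing spec files in the prompt as few-shot examples. The sketch below assumes ITMS test cases can be exported as structured data; the `ItmsTestCase` shape, the `buildCypressPrompt` function, and the prompt wording are all hypothetical, and the call to the language model itself is left out.

```typescript
// Hypothetical shape of an ITMS test case exported as structured data.
interface TestStep {
  action: string;   // what the tester does, in natural language
  expected: string; // the outcome that should be observable afterwards
}

interface ItmsTestCase {
  id: string;
  title: string;
  preconditions: string[];
  steps: TestStep[];
}

// Assembles a prompt asking a language model for a Cypress spec. Existing
// spec files from the repository are included verbatim as few-shot examples,
// so the model can mirror their structure, naming, and selector style.
function buildCypressPrompt(testCase: ItmsTestCase, styleExamples: string[]): string {
  const examples = styleExamples
    .map((spec, i) => `Example spec ${i + 1}:\n${spec}`)
    .join("\n\n");

  const preconditions =
    testCase.preconditions.length > 0
      ? testCase.preconditions.map((p) => `- ${p}`).join("\n")
      : "- none";

  const steps = testCase.steps
    .map((s, i) => `${i + 1}. ${s.action}\n   Expected: ${s.expected}`)
    .join("\n");

  return [
    "Write a Cypress test for the test case below.",
    "Follow the conventions used in the example specs.",
    examples,
    `Test case ${testCase.id}: ${testCase.title}`,
    `Preconditions:\n${preconditions}`,
    `Steps:\n${steps}`,
  ]
    .filter((part) => part.length > 0) // drop the examples section if none were given
    .join("\n\n");
}

// Usage: the resulting string would be sent to whichever model the pipeline uses.
const loginPrompt = buildCypressPrompt(
  {
    id: "TC-101",
    title: "Log in with valid credentials",
    preconditions: ["A user account exists"],
    steps: [
      { action: "Open the login page", expected: "The login form is shown" },
      { action: "Submit a valid username and password", expected: "The dashboard is shown" },
    ],
  },
  ["describe('Search', () => {\n  it('shows results', () => {\n    cy.visit('/search');\n  });\n});"],
);
console.log(loginPrompt);
```

Passing real spec files rather than written style rules lets the model infer conventions such as selector strategy and file layout from working code, which matches the goal of staying aligned with the existing repository.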

By embedding AI into the workflow, we aimed to bridge the gap between test design and automation execution, making it faster for teams to move from documented scenarios to runnable tests while maintaining quality and alignment with established development standards.

Results

Two insights stood out clearly:

- AI can generate Cypress automation code from ITMS test cases, provided those test cases are of high quality. When steps, conditions, and expected outcomes are described clearly and completely, the generated code is usable and structurally sound.

- Quality in equals quality out. The clarity and completeness of the test cases directly determine the quality of the automation code. Vague or minimal test cases result in incomplete or unreliable scripts, while detailed test cases lead to stronger automation.
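Because output quality tracks input quality, a cheap check before generation could catch test cases that are too vague to automate. The following is a minimal sketch and not part of the experiment as described: the `findQualityIssues` function, the test-case shape, and the three-word threshold are illustrative assumptions.

```typescript
// Hypothetical shape of an ITMS test case exported as structured data.
interface TestStep {
  action: string;
  expected: string;
}

interface ItmsTestCase {
  id: string;
  title: string;
  preconditions: string[];
  steps: TestStep[];
}

// Illustrative threshold: an action shorter than this many words is assumed
// to be too vague to translate into reliable Cypress commands.
const MIN_ACTION_WORDS = 3;

// Returns human-readable problems found in a test case; an empty array means
// the case passed these (deliberately simple) checks.
function findQualityIssues(testCase: ItmsTestCase): string[] {
  const issues: string[] = [];

  if (testCase.steps.length === 0) {
    issues.push("No steps defined");
  }

  testCase.steps.forEach((step, i) => {
    const wordCount = step.action.split(/\s+/).filter((w) => w.length > 0).length;
    if (wordCount < MIN_ACTION_WORDS) {
      issues.push(`Step ${i + 1}: action is too short to automate`);
    }
    if (step.expected.trim() === "") {
      issues.push(`Step ${i + 1}: no expected outcome`);
    }
  });

  return issues;
}

// A minimal test case such as "Log in" with no expected outcome is flagged twice.
console.log(
  findQualityIssues({
    id: "TC-102",
    title: "Login",
    preconditions: [],
    steps: [{ action: "Log in", expected: "" }],
  }),
);
```

In a pipeline, test cases that return any issues could be sent back for refinement in ITMS instead of being passed to the AI.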

What this means for our work

For ITMS, this experiment demonstrates how AI can streamline the automation workflow and reduce the manual workload around test implementation. Instead of spending time translating functional scenarios into technical scripts, engineers and QA teams can focus on refining test coverage, identifying edge cases, and improving overall system reliability. The value lies not just in code generation itself, but in accelerating the path from validated requirements to maintainable automated tests.

Looking ahead

This experiment opens the door to a new workflow. Test cases can be written first in ITMS, with automation code generated directly from them. In the future, updates to test cases could automatically trigger updates to Cypress tests, keeping manual and automated testing continuously aligned.
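Keeping manual and automated tests aligned requires knowing when a test case has changed since its Cypress spec was generated. One hedged way to detect this, assuming the pipeline stores a fingerprint alongside each generated spec, is to compare a normalized snapshot of the test case. This is a speculative sketch of the future workflow; the test-case shape and the names `fingerprint` and `needsRegeneration` are hypothetical.

```typescript
// Hypothetical shape of an ITMS test case exported as structured data.
interface TestStep {
  action: string;
  expected: string;
}

interface ItmsTestCase {
  id: string;
  title: string;
  preconditions: string[];
  steps: TestStep[];
}

// Collapses whitespace so cosmetic edits (extra spaces, line breaks)
// do not count as a change to the test case.
function normalize(text: string): string {
  return text.trim().replace(/\s+/g, " ");
}

// A deterministic snapshot of the parts of a test case that affect the
// generated Cypress code.
function fingerprint(testCase: ItmsTestCase): string {
  return JSON.stringify({
    title: normalize(testCase.title),
    preconditions: testCase.preconditions.map(normalize),
    steps: testCase.steps.map((s) => [normalize(s.action), normalize(s.expected)]),
  });
}

// True when the test case differs from the version the current Cypress spec
// was generated from, i.e. the spec should be regenerated.
function needsRegeneration(current: ItmsTestCase, generatedFrom: string): boolean {
  return fingerprint(current) !== generatedFrom;
}

const searchCase: ItmsTestCase = {
  id: "TC-103",
  title: "Search by keyword",
  preconditions: ["The user is logged in"],
  steps: [{ action: "Type a keyword and press Enter", expected: "Matching results are listed" }],
};
// Stored when the Cypress spec was last generated.
const storedFingerprint = fingerprint(searchCase);

const edited: ItmsTestCase = {
  ...searchCase,
  steps: [{ action: "Type a keyword and press Enter", expected: "Results are sorted by date" }],
};
console.log(needsRegeneration(edited, storedFingerprint)); // true
```

Normalizing whitespace means cosmetic edits do not trigger regeneration, while any change to a step or expected outcome does. A real implementation might hash the snapshot rather than store it in full.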

From test case to automation

This experiment shows that when test cases are written clearly and in detail, AI can successfully generate Cypress automation code from ITMS test cases. By improving how we document test scenarios, we can speed up automation, increase consistency, and let AI support engineers in building and maintaining reliable test suites.